March 21, 2023

Testing Apps on a Simulator vs. Emulator vs. Real Device

To increase your mobile testing velocity, your team has probably compared testing on a simulator vs. emulator, as well as how these differ from testing on real mobile devices.

Real devices deliver great value but carry their own set of costs. Mobile simulators and emulators also deliver unique value to both developers and testers, but they have drawbacks of their own.

Keep reading to learn how simulators, emulators, and real devices compare, what the key differences between emulators and simulators are, and when to use each for testing apps.

What is a Simulator?

A simulator is a type of virtual device that mimics the basic behavior of real iOS devices. A simulator does not necessarily follow all the rules of a real testing environment, which means you should only use simulators for select iOS testing cases.

What is an Emulator?

An emulator is a type of virtual device that mimics the behavior of a real Android device (as opposed to simulators, which are for iOS devices). An emulator operates in a virtual production environment and mimics all of an Android device's typical hardware and software features.

Advantages of Simulators and Emulators

Simulators and emulators are both virtual devices: they virtualize mobile devices and offer a number of advantages for testing mobile applications.

1. Variety

Simulators and emulators can virtualize a large variety of device and operating system permutations. This capability lets users validate easily on multiple platforms, as well as in cases requiring specific device/OS combinations. In addition, some platforms are currently supported for development and testing only through virtual devices.

2. Price

Virtual device solutions are cheaper than real devices by a wide margin. This applies to both local and cloud-based solutions. The need to drive testing early in the development process requires teams to scale and test more.

In large organizations, if each developer wants to run a set of validations pre-commit, this requires a large number of devices to execute on. The economics of running against real devices may not make sense at such a scale when virtual devices will allow the organization to scale and execute earlier in the process.

3. Starting on a Baseline

With virtual devices, you can always start from the same device state. Achieving this with real devices may require doing a factory reset, which can take a lot of time and effort.

Starting from the same point every time often increases the reliability of automated test execution, since there are no surprises. The device is always ready for execution in the way the tests were designed. For example, the device will not be locked by another user.
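As a minimal sketch of what "starting from a baseline" can look like in practice, the helper below builds the command line that returns a virtual device to a clean state. It assumes the Android SDK `emulator` binary and Xcode's `xcrun simctl` are on the PATH. The function name and the device names in the usage note are hypothetical and only illustrate the idea.

```python
from typing import List


def reset_command(platform: str, device: str) -> List[str]:
    """Build (but do not run) the command that resets a virtual device."""
    if platform == "android":
        # -wipe-data boots the named AVD with its user data reset.
        return ["emulator", "-avd", device, "-wipe-data"]
    if platform == "ios":
        # simctl erase resets a simulator's contents and settings;
        # the simulator has to be shut down before it can be erased.
        return ["xcrun", "simctl", "erase", device]
    raise ValueError(f"unknown platform: {platform}")
```

A test harness could pass the result to `subprocess.run`, for example `reset_command("android", "Pixel_API_34")`, where `Pixel_API_34` stands in for whatever AVD name your team uses.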

Main Use Cases for Mobile Simulators and Emulators

Local Development and Validation

Developers and testers use simulators and emulators on their local machines for development, app debugging, and local validations. The most common IDEs for native applications come with virtual device tools as part of the basic installation.

Xcode comes with simulator functionality, while Android Studio comes with an emulator. Both have become stable and mature in the last few years, and each has a large variety of capabilities for advanced validations.

Continuous Integration Testing

The major use case for virtual device labs is for continuous integration (CI) testing. The increased adoption of DevOps and Agile methodologies drives teams to test more in the early stages of the development process, otherwise known as shift left testing. New test automation frameworks more aligned to developers’ skills and tools, such as Espresso and XCUITest, help development teams increase their test automation coverage.

However, to run these tests you need a proper execution environment – a test cloud to run against. These automated tests can run either in the pre-commit phase, executing a set of tests for fast validation prior to committing or merging code, or triggered through the CI a few times a day, providing quick value to development teams on recent code changes.

4 Advantages of Using a Real Mobile Device

1. There’s Nothing Like the Real Thing

Virtual devices are good for basic validations, but to really validate that an application works, you need to run tests against real devices. Virtual devices can be very helpful for validating functional flows, yet they can produce false positives: tests pass even though the application has issues.

That’s because simulators and emulators test on the “happy path” — the main flow of the application rather than trying to see how the application handles negative and extreme cases.

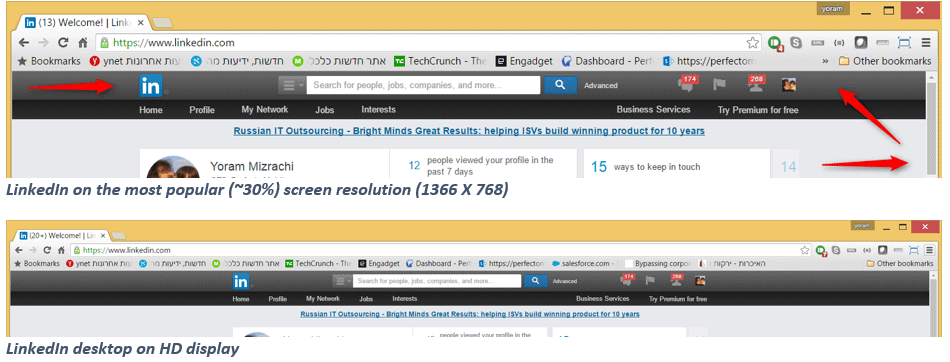

2. Better User Interface (UI) Validations

User interface validation should be done on real devices to validate the accuracy of the UI. In addition, usability issues are very easy to find on a real device, unlike on a virtual device.

For example, in most cases where input is entered from the keyboard, the keyboard overlays the app on a real device, whereas on a virtual device the keyboard is presented next to the device interface.

3. More Accurate Performance Testing

Real devices provide more accurate and reliable performance measurements on transaction times. In addition to the implications specific hardware has on performance, virtual devices also render the UI differently.

4. Improved Hardware & Sensor-Related Validations

Interactions with device hardware and sensors, such as the camera, accelerometer, and biometrics, are common use cases that can be virtualized. However, the behavior of real and virtual devices may differ in some cases, so these validations are more reliable on real devices.

Balancing Simulators vs. Emulators vs. Real Devices

Since test activities happen from early development stages all the way to deployment and monitoring of the application, there is a need for both types of platforms, real and virtual.

Below is a proposal on how to build the right mix for each one of the phases in the application lifecycle.

Code Phase

Local Validation

Developers should validate code during the development phase, either with a local device or a virtual device, which in most cases is part of the developer's IDE. In the case of UI validation, it is recommended you validate the new UI on real devices rather than virtual ones to make sure that the outcome looks as expected.

Pre-Commit Validation

Unit tests and UI unit tests should run against virtual devices in most cases (90%). However, developers sometimes change components that, based on their knowledge and experience, will behave differently on real devices. In those cases, it is recommended you give developers on-demand tools to select which tests they want to run and which platform to run against, either virtual or real.
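One way to picture such an on-demand selection tool is a small routing step that sends each test to a virtual or real device based on a flag the developer sets. This is only a sketch: the `SuiteEntry` class, the `needs_real_device` flag, and the test names are hypothetical and do not belong to any particular framework.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class SuiteEntry:
    name: str
    # Hypothetical flag a developer sets when a change is expected
    # to behave differently on real hardware.
    needs_real_device: bool = False


def route_tests(entries: List[SuiteEntry]) -> Dict[str, List[str]]:
    """Group test names by the platform they should run against."""
    plan: Dict[str, List[str]] = {"virtual": [], "real": []}
    for entry in entries:
        target = "real" if entry.needs_real_device else "virtual"
        plan[target].append(entry.name)
    return plan


suite = [
    SuiteEntry("login_flow"),
    SuiteEntry("camera_upload", needs_real_device=True),
    SuiteEntry("settings_screen"),
]
plan = route_tests(suite)
```

Defaulting the flag to `False` keeps virtual devices as the normal pre-commit target, so developers opt in to real devices only for the tests that need them.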

Build Phase

Depending on the team’s maturity, teams will run the CI process one or more times a day. The goal is to build a mix that allows scalability while providing good test coverage and insights on end user experience. To allow scale of execution and parallel validation for multiple developers and teams at the same time, a typical CI mix might look like this.

Commit Job

This job runs for every commit a developer makes, executing a basic sanity check to make sure the change didn't break the build or introduce a significant regression. Because this job needs to run at scale on every commit, these tests should run on a virtual device.

Multi-Merge Validation

These jobs validate the last X commits and test whether any of them broke the build or introduced a regression. Usually, this type of job runs every few hours or after X code commits. It runs more tests to provide broader coverage. Here, we start to introduce real devices into the CI: the mix should be 70% virtual devices and 30% real devices.

Night Job

This job runs regression tests and extends coverage. In this case, tests will run against real devices.

Test Phase

Additional test activities that run outside the CI process will run on real devices. Here, the goal is to validate the complete end-user experience, functional flow coverage, UI validation, and performance testing. All tests (100%) should run on real devices.

Monitoring Phase

While there are organizations that have Real User Monitoring (RUM) solutions that run on real devices, there is also value in synthetic monitoring: execution of single-use automated tests that measure app performance running every 10-15 minutes. In this case, to get the most accurate results, the best practice is to use real devices.

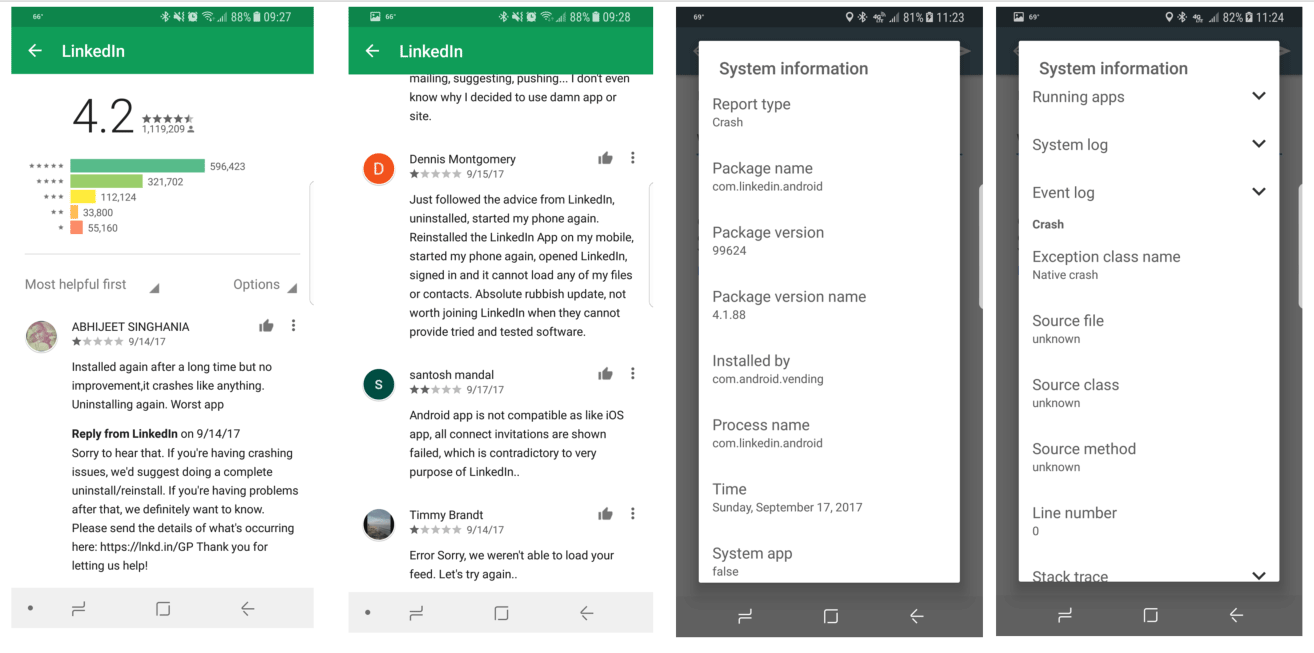
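The proposed mix can be written down as a small lookup table plus a helper that splits a test run between virtual and real devices. The percentages come from the phases described above. The stage keys and the function name are illustrative assumptions, and a real pipeline would probably split by test tags rather than by raw counts.

```python
from typing import Dict

# Share of tests (in percent) that run on virtual devices per stage.
VIRTUAL_PERCENT: Dict[str, int] = {
    "pre_commit": 90,
    "commit_job": 100,
    "multi_merge": 70,
    "night_job": 0,
    "test_phase": 0,
    "monitoring": 0,
}


def split_runs(stage: str, total_tests: int) -> Dict[str, int]:
    """Split a number of tests between virtual and real devices."""
    # Integer floor division: any remainder goes to real devices.
    virtual = total_tests * VIRTUAL_PERCENT[stage] // 100
    return {"virtual": virtual, "real": total_tests - virtual}
```

For example, `split_runs("multi_merge", 200)` assigns 140 tests to virtual devices and 60 to real devices.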

A LinkedIn Case Study

LinkedIn (acquired by Microsoft) is now serving more than half a billion users across multiple platforms like web and mobile.

LinkedIn used to suffer from poor quality, stability issues, and bad reviews. While trying to solve the quality issues and keep growing, LinkedIn announced Project Voyager, a new release model and SDLC strategy aimed at improving both quality and release velocity for the organization.

LinkedIn shifted to an admirable 3x3 release strategy. They are now able to push a new version to production 3 times a day, with no more than 3 hours between a developer's code commit and release.

Release Cadence Improved — But User Experience Declined

The release cadence improved. This allowed the LinkedIn product team to quickly adapt to changes, fix bugs faster, and innovate. But the end-user experience dropped, and many mobile users were vocal about it in the app stores, stating that they would rather use the desktop browser than the mobile app.

LinkedIn ran their test plan for the entire test scope on 16 emulators in parallel. There was 0 coverage on real devices.

This led to many defects escaping to production and impacting real-device users.

Here's what happened:

- The app crashed on Android devices when switching from Wi-Fi to real carrier networks.

- Invites from mobile to various connections did not work properly.

- There were syncing issues between the LinkedIn feed and what’s shown on the browser version.

- There were installation issues on real devices.

- And more.

Their Test Strategy Needs to Change

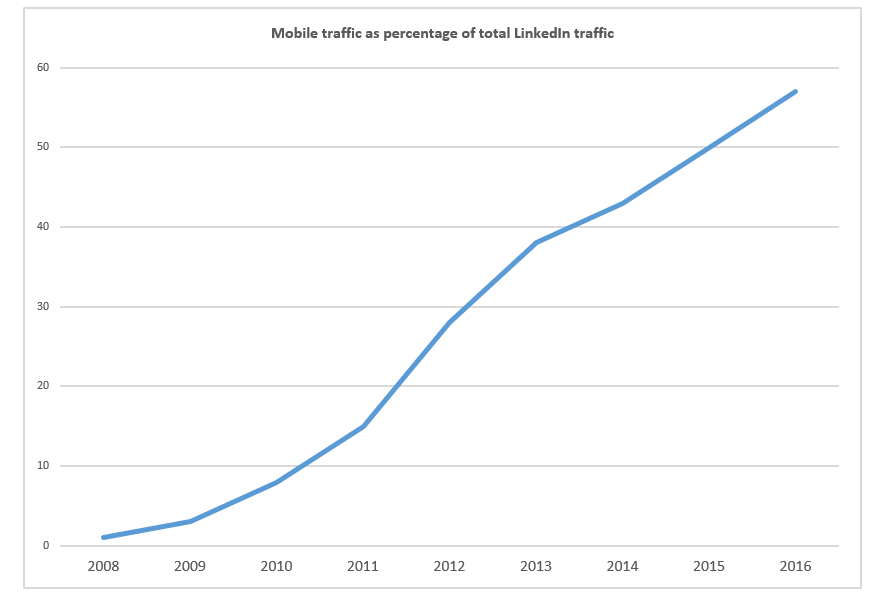

The majority of traffic to the LinkedIn app comes from mobile devices, so LinkedIn's testing strategy should be tuned accordingly.

LinkedIn needs to base their mobile testing strategy on real life personas operating from different geographic locations, varying conditions, background apps, and more.

Testing on emulators is essential and should be kept as part of the strategy. But it cannot be the only platform for testing this app. It does not guarantee continuous quality and UX.

Bottom Line

The key to implementing continuous testing and maximizing velocity in the mobile space is to have the perfect balance between simulators, emulators, and real devices. Each of these extremely different platforms brings unique values and benefits to the developers and testers. However, these values are maximized when used in the right phase of the development life cycle.

Plan the test coverage, the platforms under test, and the testing tools throughout your continuous testing activities. Continuously monitor the mobile space as well, since new devices and new versions of simulators and emulators are released constantly.

Reap the benefits of testing on simulators, emulators, and real devices with Perfecto. You’ll get the ideal mix of devices in the cloud for your testing strategy, as well as:

- Real Macs and Windows VMs to test web apps.

- Unshakeable enterprise-grade security.

- Extended test coverage.

- Elastic scaling of your tests across platforms.

- 24/7, global access to the cloud.

- Robust, built-in test reporting and analytics.

See for yourself. Get a custom demo of Perfecto today.